Traditional recommendation systems represent users and items as dense vectors and learn to align them in a shared latent space for relevance estimation. Recent LLM-based recommenders instead leverage natural-language representations, which are easier to interpret and easier to integrate with downstream reasoning modules.

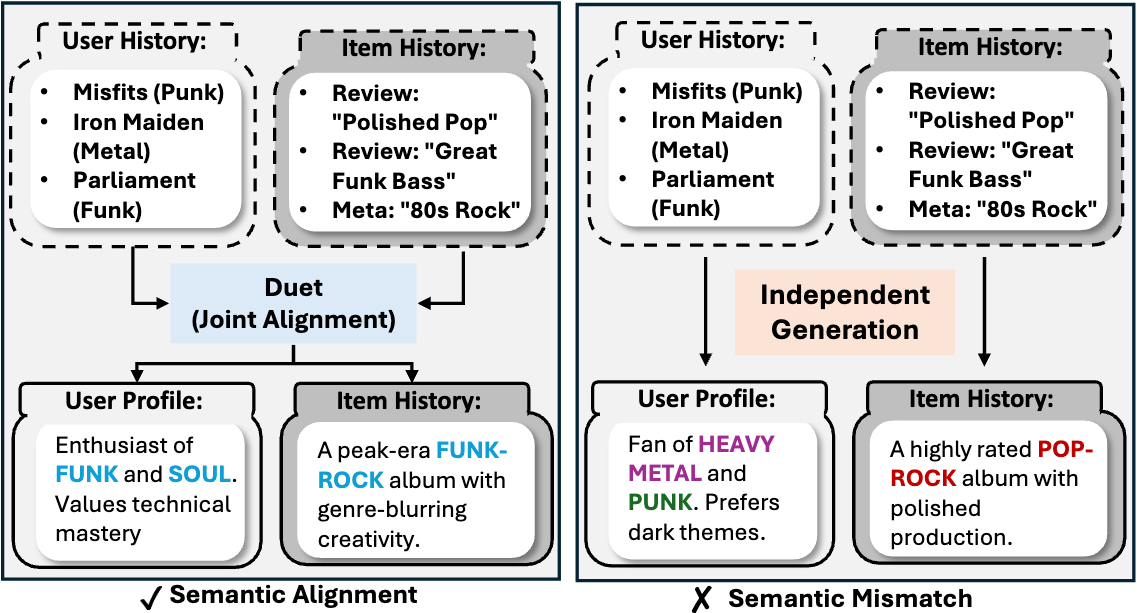

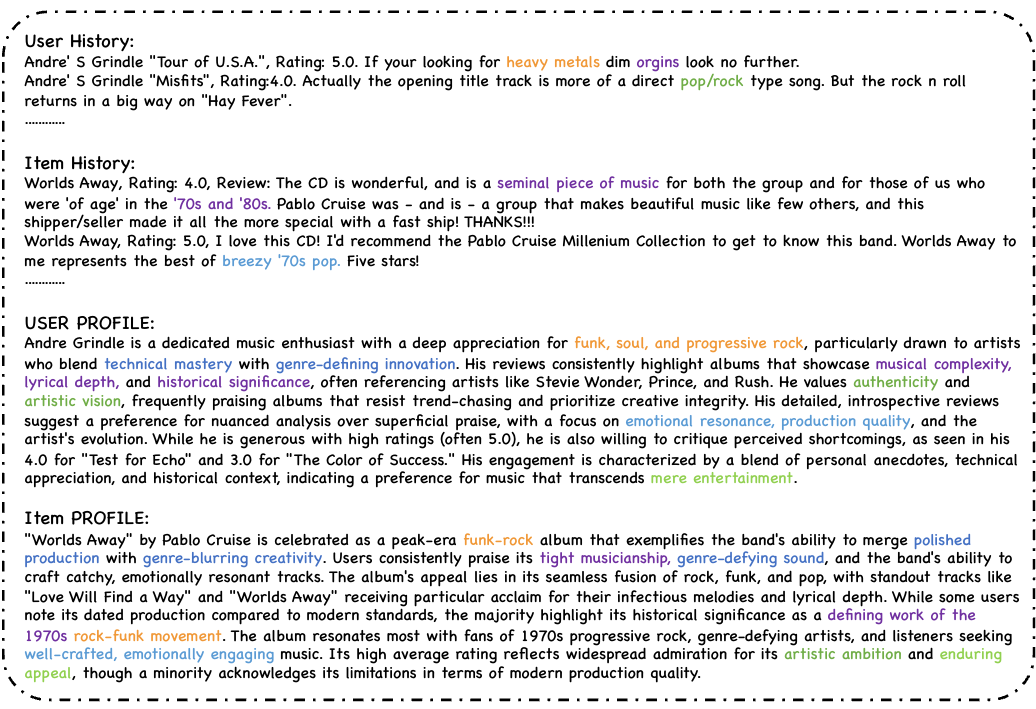

This paper studies how to construct effective textual profiles for users and items, and how to align them for recommendation. A central difficulty is that the best profile format is not known a priori: manually designed templates can be brittle and misaligned with task objectives. Moreover, generating user and item profiles independently may produce descriptions that are individually plausible yet semantically inconsistent for a specific user–item pair.

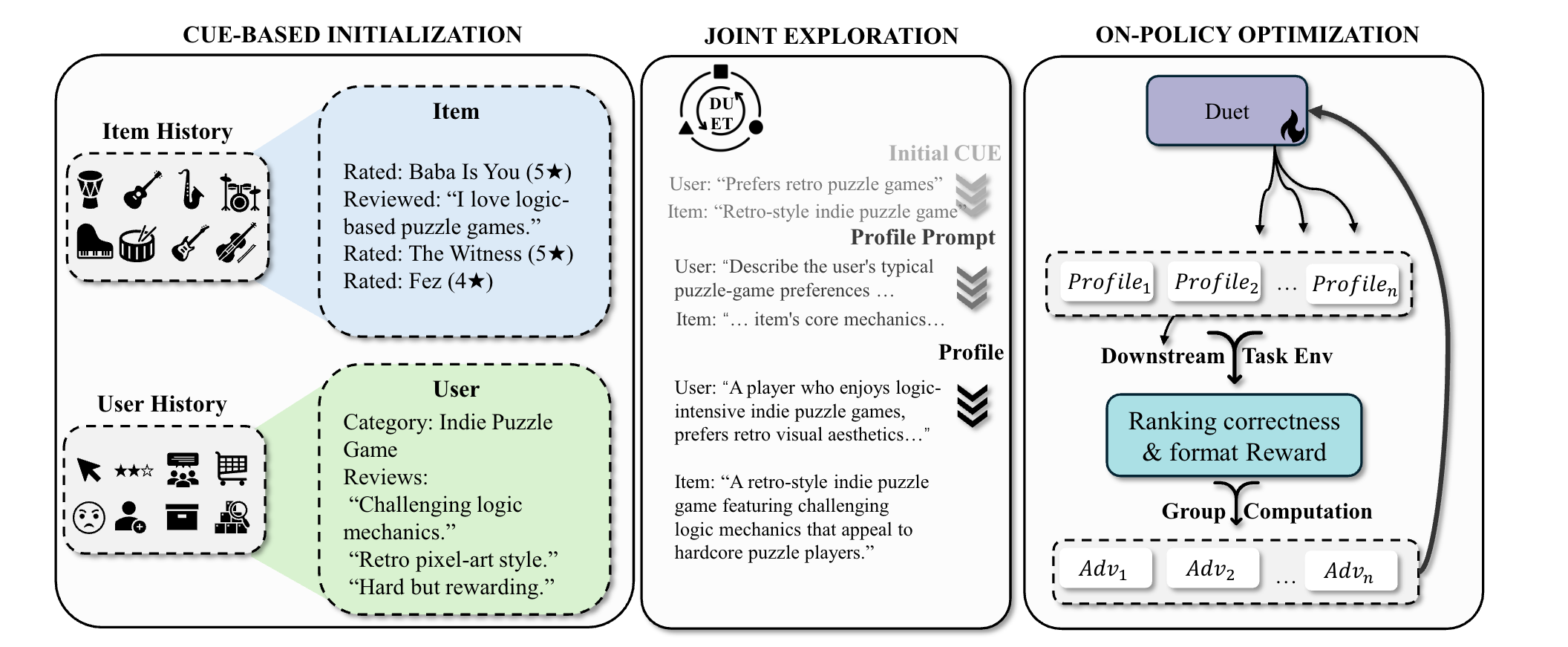

We propose DUET, an interaction-aware profile generator that jointly produces user and item profiles conditioned on both user history and item evidence. DUET follows a three-stage procedure: it first distills raw histories and metadata into compact cues, then expands these cues into paired profile prompts from which the user and item profiles are generated, and finally optimizes the generation policy with reinforcement learning, using downstream recommendation performance as the feedback signal.
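To make the data flow of the three stages concrete, the following is a minimal sketch, not DUET's actual implementation. Every name in it (`extract_cues`, `build_paired_prompt`, `generate_profiles`, `reward`) is hypothetical, the frequency-based cue extraction is a stand-in for whatever cue construction the method uses, the generator is a stub where an LLM call would go, and the negative absolute rating error is only one plausible choice of downstream feedback. The reinforcement learning update itself is omitted because this section does not specify the algorithm.

```python
from collections import Counter
from typing import Callable, List, Tuple


def extract_cues(texts: List[str], k: int = 3) -> List[str]:
    """Stage 1 (assumed form): compress raw text into its k most frequent tokens."""
    counts = Counter(tok for text in texts for tok in text.lower().split())
    return [tok for tok, _ in counts.most_common(k)]


def build_paired_prompt(user_cues: List[str], item_cues: List[str]) -> str:
    """Stage 2a: a single prompt covering both sides, so the profiles are produced jointly."""
    return (
        "User cues: " + ", ".join(user_cues) + "\n"
        "Item cues: " + ", ".join(item_cues) + "\n"
        "Write a user profile and an item profile that are consistent with each other."
    )


def generate_profiles(
    prompt: str, generator: Callable[[str], Tuple[str, str]]
) -> Tuple[str, str]:
    """Stage 2b: delegate to a generator (an LLM in practice, a stub here)."""
    return generator(prompt)


def reward(predicted_rating: float, actual_rating: float) -> float:
    """Stage 3 signal (assumed form): higher when the downstream prediction is closer."""
    return -abs(predicted_rating - actual_rating)


def stub_generator(prompt: str) -> Tuple[str, str]:
    """Hypothetical placeholder for the profile-generation policy."""
    return ("user profile placeholder", "item profile placeholder")


# Toy inputs, invented purely for illustration.
user_history = ["jazz jazz piano", "jazz piano live"]
item_metadata = ["vinyl vinyl jazz", "vinyl jazz reissue"]

user_cues = extract_cues(user_history, k=2)
item_cues = extract_cues(item_metadata, k=2)
prompt = build_paired_prompt(user_cues, item_cues)
user_profile, item_profile = generate_profiles(prompt, stub_generator)

# In training, a downstream recommender would score the profile pair and the
# resulting reward would drive a policy update; both are outside this sketch.
feedback = reward(predicted_rating=4.0, actual_rating=5.0)
```

The point of the sketch is the coupling in stage 2: because both sets of cues enter one prompt, the generator sees the user and the item together, which is what lets the two profiles be consistent for that specific pair.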

Key result: Experiments on three real-world datasets (Yelp, Amazon Music, Amazon Books) show that DUET consistently outperforms strong baselines, demonstrating the benefits of template-free profile exploration and joint user–item textual alignment.